Supercomputing Frontiers Europe 2018

Keynote speakers

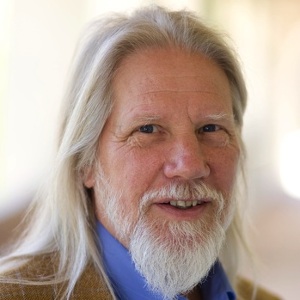

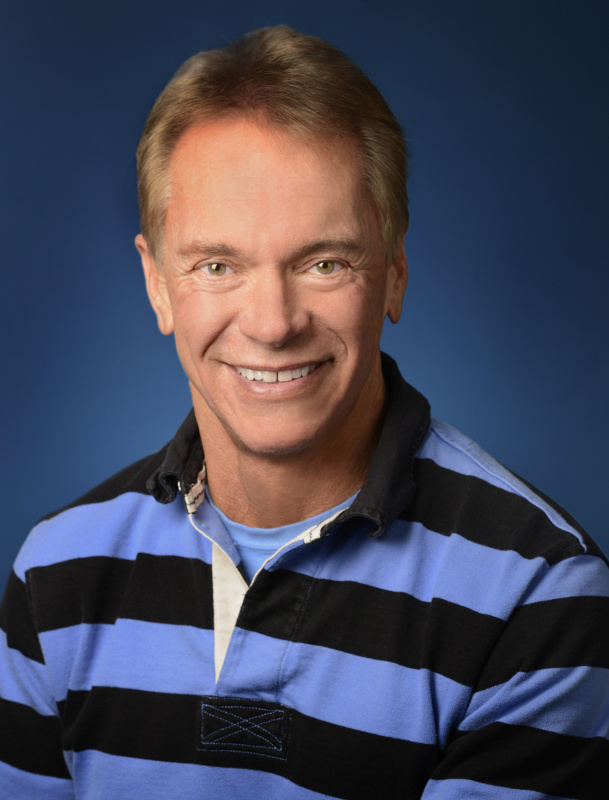

Whitfield Diffie

Title: Supercomputing Security or Supercomputing for Security

Bailey Whitfield ‘Whit’ Diffie (born June 5, 1944) is an American cryptographer and one of the pioneers of public-key cryptography. Diffie and Martin Hellman’s 1976 paper New Directions in Cryptography introduced a radically new method of distributing cryptographic keys that helped solve key distribution, a fundamental problem in cryptography. Their technique became known as Diffie–Hellman key exchange. The article stimulated the almost immediate public development of a new class of encryption algorithms, the asymmetric key algorithms.

After a long career at Sun Microsystems, where he became a Sun Fellow, Diffie served for two and a half years as Vice President for Information Security and Cryptography at the Internet Corporation for Assigned Names and Numbers (2010–2012). He has also served as a visiting scholar (2009–2010) and affiliate (2010–2012) at the Freeman Spogli Institute’s Center for International Security and Cooperation at Stanford University.

Together with Martin Hellman, Diffie won the 2015 Turing Award, widely considered the most prestigious award in the field of computer science. The citation for the award was: “For fundamental contributions to modern cryptography. Diffie and Hellman’s groundbreaking 1976 paper, ‘New Directions in Cryptography’, introduced the ideas of public-key cryptography and digital signatures, which are the foundation for most regularly-used security protocols on the internet today.”

source: https://en.wikipedia.org/wiki/Whitfield_Diffie.

Dimitri Kusnezov

Chief Scientist & Senior Advisor to the Secretary, National Nuclear Security Administration, Department of Energy

Tentative Title: Precision Medicine as an Accelerator for Next Generation Supercomputing

Dr. Kusnezov received A.B. degrees in Physics and in Pure Mathematics with highest honors from UC Berkeley. Following a year of research at the Institut für Kernphysik, KFA-Jülich, in Germany, he attended Princeton University, earning his MS in Physics and Ph.D. in theoretical physics. At Michigan State University, he conducted postdoctoral research and then became an Instructor. He joined the faculty of Yale University as an assistant professor in theoretical physics, later becoming an associate professor, and has served as a visiting professor at numerous universities around the world. Dr. Kusnezov has published over 100 articles and edited 2 books. After more than a decade at Yale, he left academia to pursue federal service at the National Nuclear Security Administration and is a member of the Senior Executive Service. He has served in multiple positions within the NNSA, was nominated by the President to serve in the National Nuclear Security Administration, and he currently serves as Chief Scientist.

Karlheinz Meier

Professor of Physics at Heidelberg University

Neuromorphic computing – From biology to user facilities

Karlheinz Meier was appointed full professor of physics at Heidelberg University in 1992, where he co-founded the Kirchhoff-Institute for Physics. He has more than 25 years of experience in experimental particle physics, including design of a large-scale electronic data processing system that enabled the discovery of the Higgs Boson in 2012. Around 2005 he became interested in large-scale electronic implementations of brain-inspired computer architectures. His group pioneered several innovations in the field. He led 2 major European initiatives, FACETS and BrainScaleS. In 2009 he was one of the initiators of the European Human Brain Project (HBP) that was approved in 2013. In the HBP he leads the subproject on neuromorphic computing with the goal of establishing brain-inspired computing paradigms as research tools for neuroscience and generic hardware systems for cognitive computing. In the HBP he is a member of the project directorate and vice-chair of the science and infrastructure board.

Abstract

Neuromorphic computing holds the promise to transfer computational advantages from biological brains to artificial systems based on silicon or new materials. Advantages include energy efficiency, fault tolerance and, most importantly, the ability to learn continuously from unstructured data. Recent advances in artificial intelligence are based on artificial neural networks (ANNs), which represent extreme simplifications of biological architectures. In particular, they ignore the time domain of neural signaling, which plays a key role in the learning and development of neural systems. In the lecture I will deliver an overview of novel brain-inspired neuromorphic hardware architectures. Particular emphasis will be given to the role of biological principles like spike-based plasticity and active dendritic trees for computation. I will also discuss the recent releases of neuromorphic systems for general use and the opportunities they offer to advance both neuroscience and machine learning.

Thomas Sterling

Professor of Electrical Engineering

Director, Center for Research in Extreme Scale Technologies (CREST)

Dr. Thomas Sterling holds the position of Professor of Intelligent Systems Engineering at the Indiana University (IU) School of Informatics and Computing and serves as Chief Scientist and Associate Director of the Center for Research in Extreme Scale Technologies (CREST). Since receiving his Ph.D. from MIT in 1984 as a Hertz Fellow, Dr. Sterling has engaged in applied research in fields associated with parallel computing system structures, semantics, and operation in industry, government labs, and academia. Dr. Sterling is best known as the “father of Beowulf” for his pioneering research in commodity/Linux cluster computing. He was awarded the Gordon Bell Prize in 1997 with his collaborators for this work. He was the PI of the HTMT Project sponsored by NSF, DARPA, NSA, and NASA to explore advanced technologies and their implications for high-end system architectures. Other research projects included the DARPA DIVA PIM architecture project with USC-ISI, the Cray Cascade Petaflops architecture project sponsored by the DARPA HPCS Program, and the Gilgamesh high-density computing project at NASA JPL. Thomas Sterling is currently engaged in research associated with the innovative ParalleX execution model for extreme scale computing, which aims to establish the foundational principles to guide the co-design of future generation Exascale computing systems by the end of this decade. ParalleX is currently the conceptual centerpiece of the XPRESS project as part of the DOE X-stack program and has been demonstrated in proof-of-concept in the HPX runtime system software. Dr. Sterling is the co-author of six books and holds six patents. He was the recipient of the 2013 Vanguard Award. In 2014, he was named a fellow of the American Association for the Advancement of Science.

Invited speakers

Tobias Becker

Head of MaxAcademy

Maxeler Technologies, USA

Computing with Data Flow Engines: The Next Step for Supercomputing

Dr. Tobias Becker is the Head of MaxAcademy at Maxeler Technologies, where he coordinates various research activities and Maxeler’s university program. Before joining Maxeler, he held positions as a researcher in the Department of Computing at Imperial College London and at Xilinx, Inc. He received a Ph.D. degree in Computing from Imperial College London and a Dipl. Ing. degree in Electrical Engineering from the Technical University of Karlsruhe (now KIT). His research work covers topics in reconfigurable computing, custom accelerators, self-adaptive systems, low-power optimisations, and financial applications.

Abstract

Data Flow Engines (DFEs) are the next generation of data processing technology beyond CPUs and GPUs. Their low power consumption and performance density address the challenges that the computing industry faces today because of stagnating performance gains in conventional CPUs. DFEs are a departure from the typical von Neumann architecture. Instead of bringing data to the CPU to be processed, the processing occurs as the data flows through large parallel processing structures on the DFE chip. As a result, the bottlenecks of centralised processing, complex memory hierarchies and time-consuming data transfers are removed. The distributed processing architecture of DFEs supports the free flow of data through the system, where data arrives at exactly the right time and location to be processed, much like in an industrial assembly line. The integration of DFEs with large amounts of memory enables data-centric processing, where databases and hard drives are only needed in the backend for long-term storage. The benefits of dataflow processing have been demonstrated across many application domains, including machine learning, finance, security and scientific computations.

Bob Bishop

EMU Technology Inc., USA

In an era of big data, is it time to update scientific content, software code and hardware architecture in one fell swoop? — the advent of processor-in-memory architecture

Bob Bishop spent 40 years in the technical, engineering and scientific computing business, and was responsible for building and operating the international aspects of Silicon Graphics Inc., Apollo Computer Inc., and Digital Equipment Corporation. To accomplish this task, he lived with his family in five countries: USA, Australia, Japan, Germany and Switzerland. He was Chairman and CEO of SGI from 1999 to 2005.

Bishop has been involved in a wide range of global initiatives including the advisory boards for EU’s Human Brain Project, National ICT Australia (NICTA), Multimedia Super Corridor of Malaysia, University Tenaga Nasional (Uniten), and UCLA’s Laboratory for Neural Imaging (LONI). He is a Fellow of the Australian Davos Connection and an elected member of the Swiss Academy of Engineering Sciences.

Bishop earned a B.S. (First Class Honors) in mathematical physics from the University of Adelaide, Australia, an M.S. from the Courant Institute of Mathematical Sciences at New York University, and received his D.S. honoris causa from the University of Queensland.

In 2006, Dr. Bishop was awarded the NASA Distinguished Public Service Medal for his role in building simulation facilities that helped NASA’s space shuttle fleet return-to-flight after the 2003 Columbia disaster.

Bishop is Chairman & Founder of BBWORLD Consulting Services Sàrl and President & Founder of The ICES Foundation (International Centre for Earth Simulation), both Geneva-based organisations. He serves on the board of EMU Technology Inc.

Sownak Bose

Harvard-Smithsonian Center for Astrophysics, USA

Copernicus Complexio Simulations

Sownak Bose writes about himself: “I am an astrophysicist at the Harvard-Smithsonian Center for Astrophysics, where I am currently an ITC Fellow. Before this, I obtained my PhD in astrophysics from the Institute for Computational Cosmology in Durham University, preceded by an undergraduate Masters degree in Physics from the University of Oxford. My research involves the study of dark matter and dark energy, and how they influence the formation of structure in the Universe through the use of large cosmological simulations on supercomputers.”

Benoît Dupont de Dinechin

Kalray, France

Manycore Accelerators beyond GPU Architecture

Benoît Dupont de Dinechin is Chief Technology Officer of Kalray. He is the Kalray VLIW core main architect, and the co-architect of the Multi-Purpose Processing Array (MPPA) processors. Benoît also defined the Kalray software roadmap and contributed to its implementation. Before joining Kalray, Benoît was in charge of Research and Development of the STMicroelectronics Software, Tools, Services division, and was promoted to STMicroelectronics Fellow in 2008. Prior to STMicroelectronics, Benoît worked at the Cray Research park (Minnesota, USA), where he designed the software pipeliner of the Cray T3E production compilers. Benoît earned an engineering degree in Radar and Telecommunications from the Ecole Nationale Supérieure de l’Aéronautique et de l’Espace (Toulouse, France), and a doctoral degree in computer systems from the University Pierre et Marie Curie (Paris) under the direction of Prof. P. Feautrier. He completed his post-doctoral studies at McGill University (Montreal, Canada) in the ACAPS laboratory led by Prof. G. R. Gao.

Abstract

High-end GPU processors implement a throughput-oriented architecture that has been highly successful for CPU acceleration in supercomputers and datacentres. GPUs can be characterized as manycore processors, that is, parallel processors where a large number of cores are distributed across compute units. Each GPU compute unit or «streaming multiprocessor» is composed of multi-threaded processing cores sharing a control unit, a local memory and a global memory hierarchy. However effective, GPU architecture entails significant limitations in the areas of expressiveness of programming environments, effectiveness on diverging parallel computations, and execution time predictability.

We discuss the architectural options and programming models of accelerators designed to address high-performance applications ranging from embedded to extreme computing. Like GPU architectures, such accelerators comprise multiple compute units connected by on-chip global fabrics to external memory systems and network interfaces. Selecting compute units composed of fully programmable cores, coprocessors and asynchronous data transfer engines makes it possible to match the acceleration performance and energy efficiency of GPU processors while avoiding their limitations. This discussion is illustrated by the co-design of the 3rd-generation MPPA manycore processor for automated driving, and is related to Kalray’s involvement in the Mont-Blanc 2020 and European Processor Initiative projects, which target exascale computing.

Robert “Bo” Ewald

D-Wave, USA

Robert “Bo” Ewald leads D-Wave’s international business as President and is responsible for global customer operations for the company. Mr. Ewald has a long history with other leading technology organizations, government projects, and industry efforts. He has experience in large and startup businesses having been the CEO of visualization and HPC leader Silicon Graphics Inc., President of supercomputing leader Cray Research, President and CEO of Linux pioneer Linux Networx and Executive Chairman of Perceptive Pixel, Inc. He started his career at the Los Alamos National Laboratory where he led the Computing and Communications Division. He has served on the boards of directors of both public and private companies and has participated in numerous government and industry panels and committees. He was appointed to the President’s Information Technology Advisory Council by both the Clinton and Bush administrations.

Nicola Ferrier

Computer Scientist & Fellow, Institute for Molecular Engineering & Senior Fellow, Computation Institute

Argonne National Laboratory, USA

Nicola Ferrier received her doctorate from Harvard University in 1992. After postdoctoral fellowships at Oxford University and Harvard, she joined the Department of Mechanical Engineering at the University of Wisconsin (UW)-Madison in 1996. She became an associate professor in 2003 and professor in 2009. She received the NSF CAREER award (1997), the UW Vilas Associates Professorship (1999), and the UW Honored Instructor Award (2009). She joined the Mathematics and Computer Science Division at Argonne in 2013.

Ferrier’s research interests are in the use of computer vision (digital images) to control robots, machinery, and devices, with applications as diverse as medical systems, manufacturing, and projects that facilitate “scientific discovery” (such as her recent project using machine vision and robotics for plant phenotype studies).

Petros Koumoutsakos

Chair for Computational Science, Computational Science and Engineering Laboratory

ETH Zurich, Switzerland

(Super)Computing for all Humankind

Petros Koumoutsakos received his Diploma (1986) in Naval Architecture at the National Technical University of Athens and a Master’s (1987) at the University of Michigan, Ann Arbor. He received his Master’s (1988) in Aeronautics and his PhD (1992) in Aeronautics and Applied Mathematics from the California Institute of Technology. He conducted postdoctoral studies at the Center for Parallel Computing (Caltech, 1992-1994) and at the Center for Turbulence Research (Stanford U./NASA Ames, 1994-1997). He was appointed as Chair for Computational Science at ETH Zurich in 2000.

Petros has been elected Fellow of the American Society of Mechanical Engineers (ASME), the American Physical Society (APS) and the Society for Industrial and Applied Mathematics (SIAM). He is a recipient of the Advanced Investigator Award from the European Research Council (2013) and led the team that won the ACM Gordon Bell Prize in Supercomputing (2013).

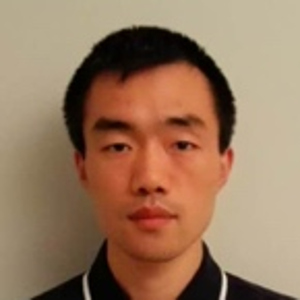

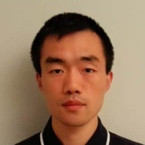

Baojiu Li

Institute for Computational Cosmology

Durham University, UK

Nonlinear partial differential equations, gravity, cosmology and supercomputing

CAREER

- 2016—

- Reader in Theoretical Astrophysics, ICC, Department of Physics, Durham University

- 2014—2016

- Senior Lecturer in Theoretical Astrophysics, ICC, Department of Physics, Durham University

- 2011‐2014

- Lecturer in Theoretical Astrophysics, ICC, Department of Physics, Durham University

- Royal Astronomical Society Research Fellow, ICC, Department of Physics, Durham University

- 2009‐2011

- Research Fellow (JRF) in Applied Mathematics, Queens’ College, University of Cambridge

- Postdoctoral Fellow, Dept. of Applied Mathematics and Theoretical Physics, Univ. of Cambridge

- Postdoctoral Fellow, Kavli Institute of Cosmology Cambridge, University of Cambridge

EDUCATION

- 2006‐2009

- PhD, Applied Maths & Theoretical Physics (DAMTP), Queens’ College, University of Cambridge

- 2004‐2006

- MPhil, Physics, Chinese University of Hong Kong (CUHK), Hong Kong

- 2000‐2004

- BSc, Physics, Tsinghua University, Beijing, China

Ronald P. Luijten

Data Motion Architect and senior project leader DOME uDataCenter project

IBM Zurich Research Laboratory, Switzerland

Objective, innovation and impact of the energy-efficient DOME MicroDataCenter

Ronald P. Luijten, senior IEEE member, received his Master’s in Electronic Engineering with honors from the University of Technology in Eindhoven, Netherlands in 1984. In the same year he joined the systems department at IBM’s Zurich Research Laboratory in Switzerland. He has contributed to the design of various communication chips, including the PRIZMA high port count packet switch and ATM adapter chip sets, culminating in a 15-month assignment at IBM’s networking development laboratory in La Gaude, France as lead architect from 1994 to 1995. He led the OSMOSIS optical switch demonstrator project for the DOE in close collaboration with Corning, Inc. from 2004 to 2007. His team also contributed the congestion control mechanism to the converged enhanced Ethernet standard and worked on the network validation of IBM’s HPC systems. He currently manages the IBM DOME microDataCenter team. Ronald’s personal research interests are in datacenter architecture, design and performance (‘Data Motion in Data Centers’). He holds more than 25 issued patents, and has co-organized 7 IEEE conferences. Over 32 years, IBM has awarded Ronald three outstanding technical achievement awards and a corporate patent award.

Abstract

The DOME MicroDataCenter, developed by IBM Zurich Research and ASTRON Netherlands Institute for Radio Astronomy, brings together the embedded and data-center computing worlds, resulting in the densest general-purpose computing capability with top energy efficiency. I will describe this five-year project and illustrate how we went from an initial idea, through obtaining funding for our small team, to building innovative hardware and software. I will explain how we used first principles from physics to motivate key decisions, highlight some of the practical technical obstacles we needed to overcome, and summarize key lessons we learnt along the way. Our result, which we are currently bringing to market through a new startup company, addresses the needs of edge computing (analytics) for the Internet of Things.

Joe Mambretti

Director of International Center for Advanced Internet Research (iCAIR)

Northwestern University, USA

Title: Next Generation Software Defined Services and the Global Research Platform: A Software Defined Distributed Environment For High Performance Large Scale Data Intensive Science

Joe Mambretti is Director of the International Center for Advanced Internet Research (iCAIR) at Northwestern University. He is also Director of MREN, a high-performance network interlinking organizations providing services in seven upper-midwest states. iCAIR, created in partnership with a number of major high-tech corporations, designs and implements large-scale services and infrastructure for data-intensive applications (metro, regional, national, and global). With its research partners, iCAIR has established multiple national and international network research testbeds that are used to develop new architecture and technology for dynamically provisioned communication services and networks, including those based on lightpath switching and 100G paths. He is also Director of the StarLight facility in Chicago; the Principal Investigator (PI) of a National Science Foundation (NSF) project to develop an international Software Defined Network Exchange (SDX); the PI of the NSF-funded International Global Environment for Network Innovations (iGENI); the PI of the NSF-funded StarWave, a multi-100G communications exchange facility; the PI of several research projects to create 100G services, network testbeds, and facilities; the PI of several national and international network testbeds; the PI of an NSF-funded GENI project that developed the world’s first SDX prototype; and co-PI of the Chameleon NSFCloud testbed. He is the author of many articles and the co-editor of Grid Networks: Enabling Grids With Advanced Communications Technology, published by Wiley.

Matthieu Schaller

University of Leiden, Netherlands

Individual time-stepping in cosmological simulations: A challenge for strong scaling and domain decomposition algorithms

Dr. Schaller obtained his PhD at the Institute for Computational Cosmology in Durham (UK), where he worked on galaxy formation and cosmological simulations. In collaboration with experts in the school of computer science, he led the development of the next-generation code named SWIFT. This new simulation software was designed from scratch to run on the largest HPC clusters using modern technologies to exploit the latest architecture changes. Some of these innovations will be presented in this talk. Dr. Schaller is now a VENI post-doctoral fellow at the University of Leiden (Netherlands), where he pursues the development of SWIFT and plans simulations for ESA’s future Euclid satellite mission.

Joanna Sułkowska

Assistant Professor, Principal Investigator at Centre of New Technology, Interdisciplinary Laboratory of Biological Systems Modelling

University of Warsaw, Poland

Tentative Title: Advanced theoretical techniques to overcome drug-resistant bacteria

Joanna I. Sułkowska, Ph.D., and her group are interested in the development of multidimensional models for analysing the energy landscape of proteins with complex structures, such as proteins with non-trivial topology, and in the development of analytical methods such as direct coupling analysis (DCA). Scientific interests: theoretical models of the energy landscape of proteins; analytical methods and bioinformatics tools to predict protein structures and folding mechanisms; mechanical properties of proteins, degradation and translation; protein-ligand interactions, membrane proteins; applications of mathematical knot theory to proteins and nucleic acids.